Insights are always PHI-free, protected by Elev8Secure.

PHI-free by architecture.

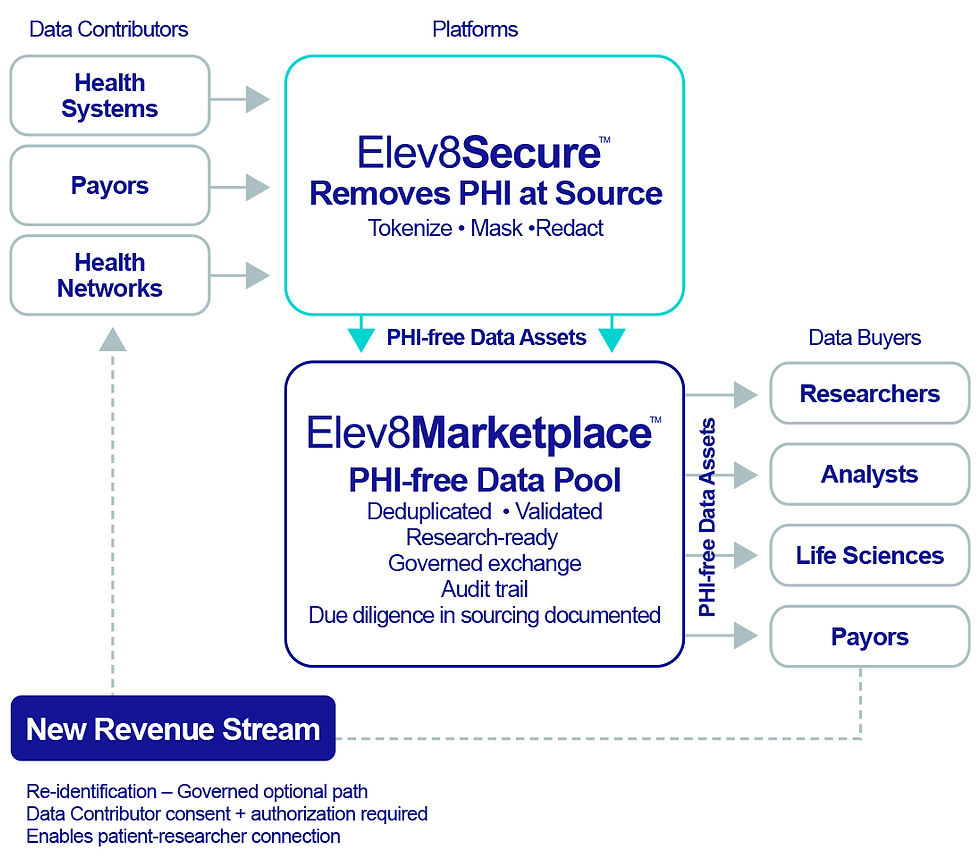

Elev8Secure strips all 18 HIPAA-defined identifiers at the field level before data leaves the data contributor's environment. What enters the marketplace is provably clean – tokenized, masked, or redacted. No PHI. No HIPAA breach reporting obligation. Powered by Elev8Secure patented technology.

You contribute. You earn.

Data contributors receive a pro-rata share of revenue based on the size and quality of their contribution to the pool. Elev8Data handles everything – marketing, licensing, delivery, and collections. You contribute once. You earn every time your data is licensed.
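As a rough illustration of the pro-rata arithmetic, the sketch below splits licensing revenue in proportion to a contribution weight. The weighting formula (record count multiplied by a quality score between 0 and 1), the function name, and all figures are assumptions made for this example; the actual Elev8Data formula is not described here.

```python
from decimal import Decimal

def pro_rata_shares(revenue, contributions):
    """Split revenue in proportion to each contributor's weight.

    contributions maps a contributor name to (record_count, quality_score).
    weight = record_count * quality_score is a hypothetical formula.
    """
    weights = {
        name: Decimal(count) * Decimal(str(quality))
        for name, (count, quality) in contributions.items()
    }
    total = sum(weights.values())
    if total == 0:
        # Nothing to weight by, so nobody earns a share.
        return {name: Decimal("0.00") for name in weights}
    return {
        name: (Decimal(str(revenue)) * weight / total).quantize(Decimal("0.01"))
        for name, weight in weights.items()
    }

# Illustrative numbers only.
shares = pro_rata_shares(
    100_000,
    {
        "hospital_a": (60_000, 0.9),
        "clinic_b": (20_000, 0.8),
        "lab_c": (30_000, 1.0),
    },
)
for name, amount in shares.items():
    print(name, amount)
```

With these made-up inputs the weights are 54,000, 16,000 and 30,000, so the three contributors receive 54%, 16% and 30% of the licensed revenue respectively.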

Your data. Your control.

Tokenized data may be re-identified only if the data contributor consents, and only in accordance with the specific permissions the contributor has configured.

You buy data. You learn.

Data buyers have access to trusted, PHI-free data outside of their institutions, enabling AI development, research, and knowledge acquisition on a larger scale. Accelerate drug discovery, streamline clinical trials, and improve patient care using multi-institutional data. One easy agreement, knowledge unlocked.

Zero operational burden.

No new workflows. No new staff. No new infrastructure. Elev8Data brings together data buyers and data contributors and manages the full commercialization lifecycle from first sale to recurring revenue. Contributors approve what's contributed. Buyers license the data they need under BAA-governed agreements. We handle the rest.

How it Works

Protect

Pool

Exchange

Elev8Secure tokenizes, masks, and redacts PHI at the field level as data leaves your environment. It is purpose-built to defeat bad actors who expose PHI when encryption is insufficient or security standards are deficient. You decide what's contributed and what stays proprietary. Carve out any proprietary data at any time.
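To make "field level" concrete, here is a minimal sketch of applying a tokenize, mask, or redact action to each field of a record. It is not the Elev8Secure implementation: the policy table, field names, and helper functions are hypothetical, and a keyed hash (HMAC-SHA256) is used only as a stand-in for tokenization. It does not model consent-based re-identification.

```python
import hashlib
import hmac

# Hypothetical per-field policy; a real one would cover all 18 HIPAA identifiers.
FIELD_POLICY = {
    "patient_id": "tokenize",
    "name": "redact",
    "ssn": "redact",
    "phone": "mask",
}

def tokenize(value: str, secret: bytes) -> str:
    """Replace a value with a keyed token, so equal inputs give equal tokens."""
    digest = hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]

def mask(value: str) -> str:
    """Hide every letter and digit while keeping the field's punctuation."""
    return "".join("*" if ch.isalnum() else ch for ch in value)

def protect(record: dict, secret: bytes) -> dict:
    """Apply the policy field by field; fields without a policy pass through."""
    clean = {}
    for field, value in record.items():
        action = FIELD_POLICY.get(field, "keep")
        if action == "tokenize":
            clean[field] = tokenize(str(value), secret)
        elif action == "mask":
            clean[field] = mask(str(value))
        elif action == "redact":
            continue  # drop the field entirely
        else:
            clean[field] = value
    return clean

# Made-up record for illustration.
record = {
    "patient_id": "MRN-001234",
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "phone": "555-010-0000",
    "diagnosis_code": "I10",
}
clean = protect(record, secret=b"demo-key-not-for-production")
print(clean)
```

Because the token is keyed and deterministic, the same patient maps to the same token across records, which is what makes longitudinal linkage and de-duplication possible without exposing the original identifier.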

Your PHI-free records join a shared marketplace of authentic, de-duplicated, validated patient data. Every contribution strengthens the pool. A richer pool means greater value — and greater revenue — returned to every contributor.

The more contributors, the richer the data. The richer the data, the more value to purchasers and contributors.

Credentialed buyers assemble datasets through a natural-language interface, with full visibility into the scope of available data before purchase. Elev8Data manages all commercialization. Contributors earn pro-rata recurring revenue based on quality, volume, and utilization.

Research-ready data for every buyer.

PHI-free, authentic, longitudinal health data – immediately usable, no remediation required.

Pharma & Biotech

Real-world evidence, drug discovery, clinical trial recruitment.

CROs & Research

Longitudinal, de-duplicated, multi-institution data without 6-month access timelines.

Women's Health

Fertility, maternal-fetal, and menopause data. Research designed around women's biology.

Medical AI

The only legally cleared source for training AI at scale. PHI-free by architecture.

Major Health Initiatives

Population health, health equity, SDoH, and chronic disease initiatives.

Cancer Research/Moonshots

Multi-institution, multi-tumor data aligned to national moonshot initiatives.

Protected PHI. Data Pooling. Value Creation for contributors and buyers.

The data you need. PHI-free. Growing every day.

PHI-free • Compliant by design • No breach exposure for buyers

Elev8Data manages all licensing, compliance, and access. You focus on delivery.

Healthspan: A Patient Scenario

A 54-year-old female presents with fatigue, metabolic drift & early inflammation markers.

Precision Medicine: Population to Patient

A health system needs treatment pathways for a complex multi-morbid cohort.

A clinician queries Elev8InsightsAI in plain language. In seconds, the system traverses validated genomic, metabolic & longitudinal data to surface a patient-specific intervention pathway — flagging modifiable risk factors ranked by evidence strength, not probability scores.

How Elev8InsightsAI Moves the Needle

- Insight delivered in natural language – no query language required
- Every recommendation traced to peer-reviewed source
- Zero PHI leaves the secure environment
- Clinician sees the why, not just the what

Elev8InsightsAI moves beyond population statistics. It derives patient-specific treatment pathways from validated causal relationships – accounting for drug interactions, comorbidities & genomic variance simultaneously – with deterministic outputs the care team can trust and defend.

How Elev8InsightsAI Moves the Needle

- Causal pathways, not correlation-based guesses
- Consistent output for identical clinical inputs – every time
- Full transparency: source, logic & evidence chain visible
- Immediate – no retraining cycle when guidelines update

Taxonomy

The classification system that brings order to medical knowledge.

Ontology

The relationship engine that connects medical knowledge into a coherent whole.

Semantic Layer

The intelligence interface that makes the system immediately usable.

What it Is

A rigorously structured hierarchy that classifies every medical concept – diseases, drugs, procedures, biomarkers, symptoms – into precise, unambiguous categories with defined relationships between them.

What it Does

Eliminates the synonym problem. “Hypertension,” “high blood pressure,” and “HTN” are one concept – not three. Every term maps to exactly one meaning, across every data source, every time.
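The synonym mapping can be pictured as a lookup from normalized surface terms to a single concept identifier. The sketch below is illustrative only: the concept identifiers, the synonym table, and the function names are hypothetical and do not reflect the actual Elev8InsightsAI taxonomy.

```python
from typing import Optional

# Hypothetical synonym table: many surface terms, one concept identifier each.
SYNONYMS = {
    "hypertension": "CONCEPT:HYPERTENSION",
    "high blood pressure": "CONCEPT:HYPERTENSION",
    "htn": "CONCEPT:HYPERTENSION",
    "type 2 diabetes": "CONCEPT:T2DM",
    "t2dm": "CONCEPT:T2DM",
}

def normalize(term: str) -> str:
    """Lowercase the term and collapse runs of whitespace."""
    return " ".join(term.lower().split())

def to_concept(term: str) -> Optional[str]:
    """Return the single concept a term maps to, or None if it is unknown."""
    return SYNONYMS.get(normalize(term))

for term in ["Hypertension", "high  blood pressure", "HTN"]:
    print(term, "->", to_concept(term))
```

All three spellings resolve to the same identifier, so downstream queries count one condition rather than three.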

What it Is

A formal map of how medical concepts relate to each other – causation, contraindication, mechanism of action, comorbidity, genomic association – validated against 4M+ peer-reviewed biological relationships.

What it Does

Enables the system to reason across domains. A drug does not just treat a disease – it inhibits a protein, which modulates a pathway, which affects a symptom cluster. Ontology makes that chain traversable and auditable.
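One way to picture a traversable, auditable chain is a graph of typed relationships searched step by step, with each hop recorded. The sketch below uses a breadth-first search over a handful of placeholder edges. The entity names, relation labels, and the `find_chain` function are assumptions for illustration, not the product's data model.

```python
from collections import deque

# Hypothetical typed edges: (subject, relation, object).
EDGES = [
    ("drug_x", "inhibits", "protein_p"),
    ("protein_p", "modulates", "pathway_q"),
    ("pathway_q", "affects", "symptom_cluster_s"),
    ("drug_x", "contraindicated_with", "drug_y"),
]

def find_chain(start, goal, edges=EDGES):
    """Breadth-first search; returns the edges linking start to goal, or None."""
    adjacency = {}
    for subj, rel, obj in edges:
        adjacency.setdefault(subj, []).append((rel, obj))

    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for rel, obj in adjacency.get(node, []):
            if obj not in visited:
                visited.add(obj)
                queue.append((obj, path + [(node, rel, obj)]))
    return None

chain = find_chain("drug_x", "symptom_cluster_s")
for subj, rel, obj in chain:
    print(f"{subj} --{rel}--> {obj}")
```

The returned path is the audit trail: every hop names the relationship that justified it, and the same inputs always yield the same path.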

What it Is

The translation layer between structured medical knowledge and the humans who need it. Natural language in. Deterministic, grounded, evidence-based insight out – with no LLM drift, no hallucination risk.

What it Does

A clinician asks a complex question in plain language. The Semantic Layer maps that question precisely onto the Taxonomy and Ontology – retrieving a verified answer, not a probabilistic approximation.

Taxonomy:

- No ambiguity in how medical concepts are defined
- Consistent classification across EHRs, labs, genomics & claims
- Structured foundation that makes every query answerable – not estimated

Ontology:

- Relationships are validated, not inferred from training data
- Causal pathways replace probabilistic correlations
- Every connection is explainable, traceable & defensible

Semantic Layer:

- NLP front door – no query language or training required
- Outputs grounded in validated facts, not generated text
- Same question, same answer – every time, for every user

Taxonomy + Ontology + Semantic Layer = Intelligence you can trust. Insights you can act on.

Why is healthcare still betting patient outcomes on LLM-based, probabilistic AI?

LLMs generate answers by predicting the next statistically likely word after being trained on billions of internet documents that include misinformation, retracted studies, and unvetted community commentary on health issues. More dangerously, LLMs speak authoritatively about things they have entirely made up, making fictions sound like facts. RAG reduces LLM hallucinations, but it does not eliminate them. Is this the technology you want guiding your healthcare decisions?

Critical Questions Lack Reliability

While LLMs may return reasonably consistent answers to simple, often-asked questions, the same cannot be said for questions involving complex, multivariate clinical cases – things like drug interactions across comorbidities, off-label drug use, rare disease presentations, and more. When questions are complex or hard, LLMs can return meaningfully different answers, even if the queries are structured identically. In healthcare, inconsistency is liability.

No Chain of Evidence

LLMs and RAG systems treat a landmark, peer-reviewed trial and an unreviewed preprint as equivalent inputs to their decision making. There is no mechanism to weight, flag, or filter by validation status. As a result, clinicians who use LLMs and RAG systems receive answers with no way to know whether the source is FDA-approved guidance, a decades-old study, a retracted and erroneous paper, or some unexplained amalgamation of all of them.

Reasoning without Accountability

LLMs generate plausible-sounding explanations for their answers, but those explanations are themselves probabilistic outputs, not traceable logic. The derivation of an answer might be rational and follow human-like reasoning, or it might be completely arbitrary. There is no path from the LLM's answer back to its sources, no explainability, no auditability, and no defensibility.

Compounding Cost of Staying Current

LLMs encode medical knowledge in model weights. When medical knowledge evolves – perhaps guidelines are updated, or new therapies emerge – the model is instantly out of date. Keeping an LLM current requires expensive, compute-intensive, and time-consuming retraining. A full retraining can cost upwards of $100 million, plus compounding inference costs at scale, and the LLM will continue to return outdated answers while it is being retrained.

Probabilistic, not Deterministic

RAG adds a probabilistic retrieval step before a probabilistic generation step, which means the system guesses which documents are relevant, and then guesses what to say about them. In short, RAG systems stack two layers of uncertainty to generate a certain-sounding answer. Who wants a healthcare AI system built on guess upon guess?


No other marketplace eliminates PHI.

Every competitor — Oracle, Truveta, UK Biobank — de-identifies or uses Safe Harbor stripping. PHI is still in the environment, managed under access controls. Elev8Marketplace, powered by Elev8Secure, eliminates PHI before data enters the marketplace. There is nothing to breach or subpoena.

PHI eliminated, not obscured

BAA-governed

EHR-agnostic

Authentic data, not synthetic

Direct Revenue to contributors

Compliance:

21st Century Cures Act

HIPAA

SOC2

HITRUST